In our 4-part series highlighting Onfido products and their development, we’re exploring why collaborative development creates customer-centric products, looking at some of our products and their stories, and, in this article, taking a deep dive into one case study: Onfido Watchlist.

The Watchlist problem

Watchlist screening is a global regulatory requirement for businesses to meet KYC (Know Your Customer) and AML (Anti-Money Laundering) laws.

When regulated financial services businesses onboard end users, they have to be confident that those users aren’t sanctioned, politically exposed (for example Boris Johnson at one end of the spectrum, or the mayor of a small French village at the other), associated with certain types of crime, or on any other naughty lists.

So they have to screen thousands of sources to get that confidence – and they have to do it armed with very little end user data: at most a name, date of birth and country of residence. That’s not a lot to go on!

A very human problem

This presents a very costly human problem. Our clients employ big teams of operations people, whose painful job it is to look through long lists of bad guys (who may or may not be their end user) and decide whether or not to onboard.

To make life more complicated, they’re almost always using multiple systems to complete this process: Onfido’s dashboard, their own back office system, and sometimes even another partner system in the mix.

The primary pain point

These operations analysts have a primary pain point, which is inherent in all AML screening products: false positives.

A false positive is a “match” which shares some attributes with their end user, like first and last names, but isn’t their end user. Being able to tell the difference quickly and confidently between these false positives and actual matches can have an enormous impact on a company’s onboarding rate.

And just as we don’t have many attributes to use when we’re screening sources, the same applies to our clients’ analysts – they have at most a name, date of birth and country of residence at their disposal to work out what is and isn’t a match.

In this blog, we’ll explore how we partnered with our clients to help them with this problem.

Watchlist user interface MVP

Let’s first take a look at our Watchlist product a few months back, when it was more of a Minimum Viable Product (MVP) – a state that we still believe is critical for fast feedback and high quality insights from clients.

Inside the Watchlist check, we can see the overall report result - in this case it’s ‘Consider,’ indicating that there’s something the client needs to investigate. Below the result we have the report “breakdowns,” the results of which contribute to that overall recommendation. So far so good.

But after that, things get a bit tricky… the analyst is presented with a list of matches and match data all on one page. There’s plenty of data about the match, but it’s not really clear what it all means.

We also can’t see on this page any information about our end user to compare against the match data, so it would be impossible for an analyst to tell at a glance whether this is a match, a false positive, or requires further investigation.

It was clear that this UI wasn’t solving the problem.

The discovery process

It was also clear that to solve the problem effectively, we needed to approach it hand-in-hand with our clients.

Finding the right clients

We defined some simple criteria to help us recruit the right clients to partner with us on a discovery project.

- They should be actively feeling the pain points right now. We wouldn’t learn much by asking happy clients what they’d like us to tweak - we needed to find clients who were hurting and ask them to work with us to fix this together.

- They should be mid-market clients. We needed to talk to the businesses most likely to use the Onfido dashboard to review results, not the enterprise clients who are more likely to just consume everything via API and pump it into their own complex back office systems.

- Finally, they should represent a range of sub-verticals within financial services - because different sub-verticals like crypto, trading platforms, and fintech challenger banks all have differing risk appetites and processes. Our job is to spot patterns that apply to all of them, then build what’s generalisable.

We leveraged our existing strong client relationships to recruit clients that fit the bill, and got to work interviewing.

Interviews

For this project, the personas we were speaking to tended to be a mix of product, compliance and operations people, who all touch the Watchlist problem at different points:

- product people need to be confident that they can integrate us into their own systems and processes

- compliance people need to know that we’re going to keep them on the right side of the regulator

- and the operations people are usually our day-to-day Watchlist UI users

We wrote and used a detailed discussion guide for each interview to make sure we got the insights we needed by asking the right open questions. The high-level goals were to:

- get a deep understanding of our clients’ end-to-end manual review process (both inside and outside our product)

- validate our existing hypotheses and assumptions

- understand to what extent we’d already alleviated pain points

- discover new problems that needed solving that we hadn’t even considered yet

Our initial interviews were the first pass towards achieving those goals.

Shadowing

The next step took us deeper – shadowing the operations team as they undertook the manual review process. This is where the very richest insights come from during discovery – observing pain points first hand is far more valuable than just hearing about them. When a client or a user tells us what they want (or better yet, what they need), it’s definitely useful, but it’s coming through a lens, there’s inevitable bias, and it doesn’t get us to the root of the problem. But when someone shows us what they do day-in day-out, that’s when the light bulb goes on, we can really empathize, and we get that deep understanding of the job to be done.

While we listened and watched, we were disciplined in being super objective. We took reams of notes, but they were always either direct observations or quotes - they weren’t opinions or inferences or solutions.

We undertook this process several times with various clients, then we took away what we’d learned and synthesized this rich information into overarching themes and more granular categories.

Design and build

Next it was time to design! We got designs in front of our discovery cohort before we went any further, but it’s important to stress that this stage wasn’t sign-off or approval (we’re not an agency). It was about getting a sense as early as possible in the process of the degree to which we were going to move the needle for our clients with our enhancements.

Finally, we entered the build-measure-learn cycle that we all know and love - iterating rapidly with a weekly release cadence, then shadowing again to get more rich qualitative data in, synthesizing our learnings, and repeating the cycle.

Enhanced Watchlist user interface

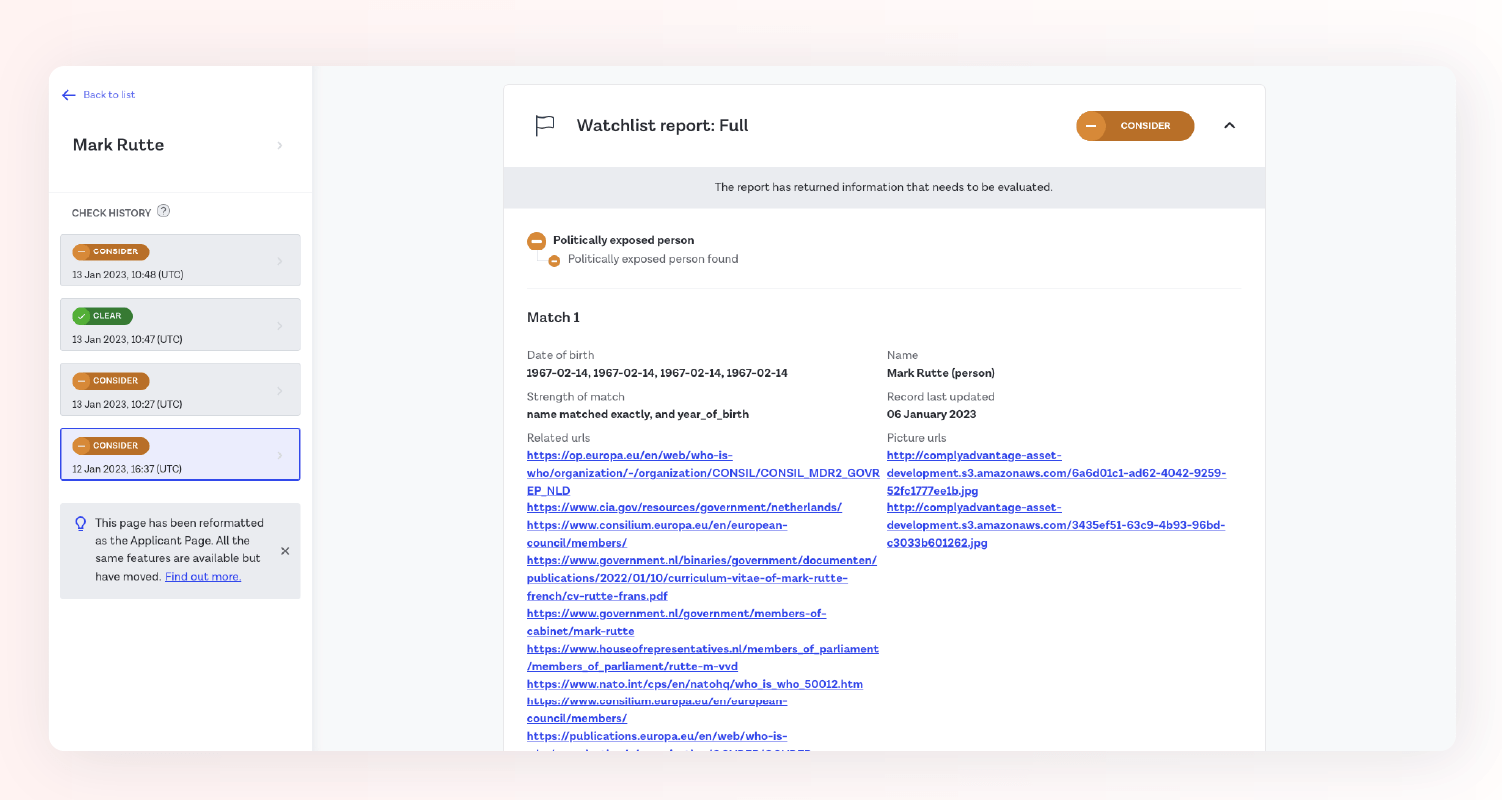

Now let’s look at our Watchlist UI as it is today, after collaborating with and learning from our clients. Remember that the overarching job to be done is identifying matches and false positives quickly and accurately.

Here’s our Watchlist check again, with enhancements designed to reduce that operational burden:

- At match list level, we’re now exposing more data – you can see the match name, match type, date of birth, and nationality (all data points that we know from our clients are key to helping operations analysts identify false positives at a glance)

- We’re displaying the end user data right above the match data, for quick comparison – analysts no longer need to navigate to another page for that, a painful journey we observed during shadowing

- Analysts can also now filter by match type, so they can cut out the noise and just review the category that’s highest priority to them

- End user data is now displayed at match level as well, because we never want to force analysts to go backwards to compare data

- There’s now a photo of our end user alongside a photo of the apparent match - doesn’t look like this is our user!

- There’s a lot more match information than we were returning before, but it’s been restructured much more clearly into sections to help analysts review according to match category

- We’re also now expanding adverse media URLs and including lead text, to make it faster to check if an article is about an end user

- Matches are now ranked by a scoring system according to the factors we know matter most to our clients
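
To make the filtering and ranking ideas above a little more concrete, here is a minimal sketch in Python. It is not Onfido’s implementation: the class names, match type labels, weights and scoring rule are all hypothetical, and comparing an end user’s country of residence with a match’s nationality is an assumption made purely for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class EndUser:
    name: str
    date_of_birth: Optional[date] = None
    country: Optional[str] = None  # country of residence


@dataclass
class Match:
    name: str
    match_type: str  # hypothetical labels, e.g. "sanction", "pep", "adverse_media"
    date_of_birth: Optional[date] = None
    nationality: Optional[str] = None


# Hypothetical integer weights, chosen only for illustration.
WEIGHTS = {"name": 5, "date_of_birth": 3, "country": 2}


def score(user: EndUser, match: Match) -> int:
    """Add a weight for every attribute that agrees between user and match."""
    total = 0
    if user.name.casefold() == match.name.casefold():
        total += WEIGHTS["name"]
    if user.date_of_birth is not None and user.date_of_birth == match.date_of_birth:
        total += WEIGHTS["date_of_birth"]
    # Assumption: residence and nationality are treated as comparable here.
    if (
        user.country is not None
        and match.nationality is not None
        and user.country.casefold() == match.nationality.casefold()
    ):
        total += WEIGHTS["country"]
    return total


def filter_by_type(matches: List[Match], match_type: str) -> List[Match]:
    """Keep only the matches in the category the analyst wants to review."""
    return [m for m in matches if m.match_type == match_type]


def rank(user: EndUser, matches: List[Match]) -> List[Match]:
    """Order matches so that the most likely true matches come first."""
    return sorted(matches, key=lambda m: score(user, m), reverse=True)
```

In a sketch like this, a match that shares only a name with the end user sinks below one that also shares a date of birth, which is the kind of ordering that lets an analyst dismiss likely false positives quickly.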

We’re by no means done – discovery and delivery is a never-ending process! We know that there’s plenty more to do to ensure that Onfido can be a one-stop-shop for Watchlist manual review. But we didn’t want to wait for full case management to start alleviating some of our clients’ operational burden – working this closely with clients means we need to release rapid and incremental improvements.
